74% concerned about the unethical use of AI: How businesses can remain transparent

Artificial intelligence has quickly and steadily wormed its way into our day-to-day lives, with many people using it for work, to plan trips, to create recipes and to manage big projects. However, just because its presence and use are ubiquitous does not mean that it is automatically trustworthy.

A recent Salesforce survey in Singapore reinforced the idea that human involvement is imperative to securing customer trust, with 89% of respondents saying it is important for them to know whether they are communicating with AI or a human. In addition, 74% said they are concerned about the unethical use of AI, while 63% believe that generative AI will lead to unintended consequences for society.

It seems the fear of AI is as prominent as AI itself. However, as costs rise, generative AI holds tremendous promise for helping businesses weather these challenges. That makes it all the more necessary for companies to use AI, and to do so carefully.

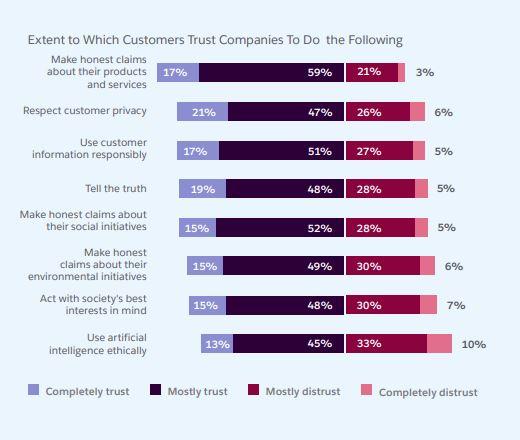

According to the report, most people's main concerns about generative AI centre on its implications for data security, ethics and bias. To combat this, companies can focus on communicating transparently about how they use AI and making it clear that their employees, not the technology, are in the driver’s seat, it said. "For instance, a mere 37% of customers trust AI’s outputs to be as accurate as those of an employee. Accordingly, 81% want a human to be in the loop, reviewing and validating those outputs," the report added.

This desire for human connection stems from customers' belief that they are more likely to be properly attended to by a person. Nearly half of the respondents, the report explained, are willing to pay extra for better customer service, underscoring the importance of customer experience even in an age of price sensitivity. "77% of customers expect to interact with someone immediately when they contact a company," it added.

Factors to deepen consumer trust in AI

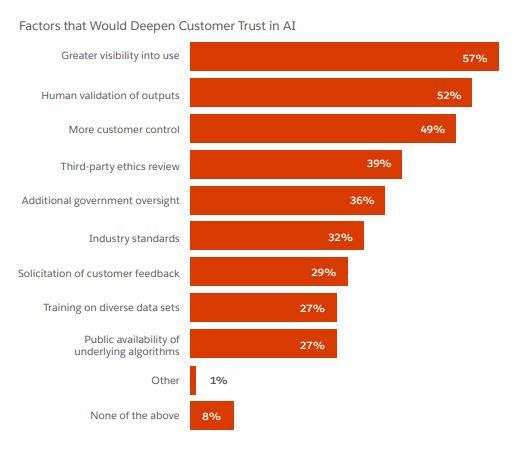

As companies continue to integrate AI across their businesses, they should also deepen trust by focusing on the areas customers identify as priorities. Transparency, the report said, is the foundation of what consumers are looking for: over half of the respondents said that greater visibility into how AI is applied would boost their trust. Human validation of AI's outputs follows closely, just ahead of where and how AI is applied.

According to Leonard Lee, president of Beyond Limits APAC, organisations can build trust before adopting generative AI by establishing and adhering to an internal governance framework that considers enterprise-wide risks across use cases. This means putting in place policies and procedures for assessing and mitigating bias, data privacy and security risks, and for identifying and correcting compliance issues efficiently.

"As security and privacy concerns continue to mount, companies, particularly those new to generative AI, should exercise caution and ensure that the technology is ready for implementation, given the vast amounts of data required," he added. Moreover, with the increasing use of scraped data, biases can unintentionally creep into generative AI models, making vigilance about data integrity all the more essential.

Don Anderson, founder and CEO of Kedaddle, said that being too trusting of AI comes with many pitfalls. If consumers become overly trusting of AI, there is a risk of letting it guide every facet of their lives.

"At this stage, the bigger tech players and platforms are in a full-out war to win the AI showdown. They have the most resources to arm themselves in this AI war, and they are moving far faster than any government regulatory arm could ever keep pace with," he said.

Along with the fear of its entrenchment in society, there is also growing resentment of the technology. "No question that we have anger and depression in the mix, as demonstrated by the current Hollywood Writers Guild strike over the AI threat to creative livelihoods, and protests that have occurred by workers in other parts of the world in recent months. Or the very recent example of a Malaysian radio station using an AI DJ," Anderson said.

"Expect more of this as the realities of the effect and impact of these technologies become clearer among populations. We’re in a bit of a starry-eyed ‘honeymoon phase’ with AI as what always occurs with the bright shiny toy of new technologies. We’re more captivated by the positive possibilities of its use than the negatives, which might explain in part the survey results," Anderson said.

